Let's Admit It: The BI Era is Ending

March 11, 2026


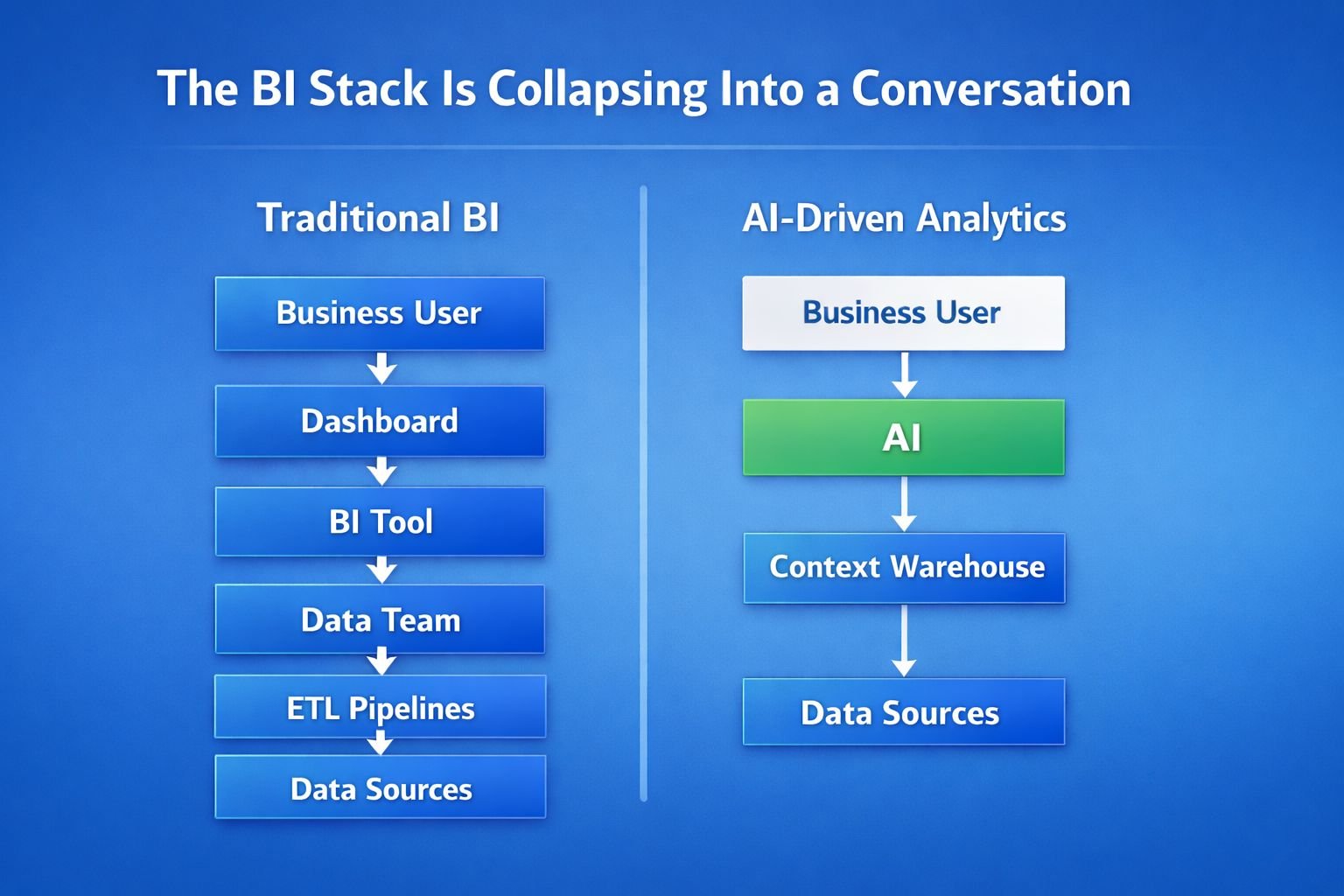

We built business intelligence for a world where pulling data was hard. Oh, and it required a group of sorcerers we called the data team to perform mysterious incantations called ETL and SQL.

Dashboards, query layers, and semantic models existed to make data accessible to people who couldn’t speak the language of data. The whole stack—from data warehouses to BI tools like Tableau, Looker, and Mode—was designed around a language barrier. As such, accessing data required specialists who spoke these foreign (almost magical) languages.

Those wand-wielding specialists built all these layers:

- data warehouses

- data teams

- BI tools

- dashboards

Each one existed to translate data into something the rest of us could understand.

But AI is rapidly commoditizing the mechanics of analytics. Charts are becoming trivial. SQL is becoming trivial. Even dashboards can now be generated on demand.

The interface of BI is collapsing into a conversation: you now ask a question, you get an answer, then you ask the next question.

But that was never the hardest problem. The real bottleneck was never accessing the data; it was understanding what the data actually means. Anyone could piece together a magical incantation that returned garbage data, or no data at all.

Moving Towards Commoditization

The first parts of analytics to get commoditized are the obvious ones: chart creation and text-to-SQL. Any LLM can do that pretty well now. ChatGPT can write queries if you tell it what you want, and Claude can make pretty graphs.

So I argue that if generating the chart or query is easy, the traditional BI interface starts to break down.

Analysts pre-built our answers in dashboards because querying data was expensive, but when querying becomes cheap, the need for pre-built dashboards starts to fade.

Instead of navigating a dashboard, people can simply ask:

- “What changed in pipeline this week?”

- “Why did churn spike in March?”

- “Which customers are most likely to expand?”

And the system generates the analysis dynamically. That shift alone will commoditize large parts of traditional BI. But even that isn’t the most interesting change.

Because the real bottleneck in analytics isn’t the charts or the queries. It’s the meaning behind the numbers.

The Real Problem is Institutional Knowledge

Data problems are rarely technical. They are organizational, “people problems” if you will.

Consider a common scenario, one that recently played out at a well-known decacorn.

At the company’s sales kickoff, two executives present metrics about the health of the business.

The CRO presents a slide showing net revenue retention. Then the VP of RevOps presents their own NRR number.

The numbers don’t match.

Suddenly the entire room is confused.

Here’s the rub: neither number is “wrong.” Both are calculated correctly.

They just use slightly different definitions. One includes reactivation revenue. The other excludes it.
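A quick worked example shows how both numbers can be "correct" at once. This minimal Python sketch uses made-up revenue figures (not the company's actual data) and a common simplified form of NRR: starting revenue plus expansion, minus contraction and churn, divided by starting revenue. The class and function names are hypothetical; the only difference between the two calls is whether reactivation revenue counts.

```python
from dataclasses import dataclass


@dataclass
class RevenueMovements:
    """Hypothetical annual revenue movements for one customer cohort."""
    starting: float      # recurring revenue from the cohort at period start
    expansion: float     # upsells and cross-sells
    contraction: float   # downgrades
    churned: float       # revenue lost from customers who left
    reactivation: float  # revenue from previously churned customers who returned


def nrr(r: RevenueMovements, include_reactivation: bool) -> float:
    """Net revenue retention as a ratio of ending to starting revenue."""
    ending = r.starting + r.expansion - r.contraction - r.churned
    if include_reactivation:
        ending += r.reactivation
    return ending / r.starting


# Illustrative numbers only.
cohort = RevenueMovements(
    starting=1_000_000, expansion=150_000, contraction=30_000,
    churned=70_000, reactivation=40_000,
)

print(f"Definition A (includes reactivation): {nrr(cohort, True):.1%}")   # 109.0%
print(f"Definition B (excludes reactivation): {nrr(cohort, False):.1%}")  # 105.0%
```

Same data, same arithmetic, two defensible answers: the gap comes entirely from the definition.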

The problem wasn’t the dashboard. The problem was that the organization didn’t have a shared definition of the metric, and this happens everywhere.

- A company defines “active customer” three different ways.

- Some PM says, “we stopped using that column three years ago,” and it turns out a product metric silently changed definition after a data migration.

So the challenge isn’t querying the data. The challenge is remembering how the business interprets it.

Yes, We are “Post-Ontology”

Historically, BI tried to solve this with fancy spellbooks called ontologies and semantic layers.

The idea was pretty simple:

- Define the business upfront.

- Define every metric.

- Define how every table relates to every other table.

- Then enforce those definitions across dashboards.
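To make the "define everything upfront" model concrete, here is a minimal Python sketch of a centrally designed metric registry. The `Metric` class, `REGISTRY`, `compile_metric`, and the `customers` table are all hypothetical and are not the API of any real semantic-layer product; the sketch only shows the shape of the approach: definitions are fixed in advance and enforced wherever the metric is used.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """One centrally designed metric definition (hypothetical schema)."""
    name: str
    table: str
    expression: str  # SQL aggregate, e.g. COUNT(DISTINCT ...)
    where: str       # the agreed-upon filter baked into the definition


# Every metric must be declared here, upfront, before anyone can query it.
REGISTRY = {
    "active_customers": Metric(
        name="active_customers",
        table="customers",
        expression="COUNT(DISTINCT customer_id)",
        where="status = 'active'",
    ),
}


def compile_metric(name: str) -> str:
    """Turn a registered metric into SQL; refuse anything not designed upfront."""
    if name not in REGISTRY:
        raise KeyError(f"metric {name!r} is not defined in the semantic layer")
    m = REGISTRY[name]
    return f"SELECT {m.expression} AS {m.name} FROM {m.table} WHERE {m.where}"


print(compile_metric("active_customers"))
# SELECT COUNT(DISTINCT customer_id) AS active_customers FROM customers WHERE status = 'active'
```

Calling `compile_metric("engaged_customers")` raises a `KeyError`: the registry can only answer questions someone thought to define beforehand, which is the rigidity this approach runs into in practice.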

In theory, that creates consistency. In practice, it rarely works, because real companies don’t understand every nook and cranny of their business upfront. They learn it over time.

Case Study: Foot Locker

Consider a retail company trying to understand how its marketplace strategy interacts with brand-owned online stores.

The question seems straightforward: how do you compete with brands that also sell directly to consumers? But answering it requires pulling context from many teams, from the retail strategy team to the brand partnerships team to the marketing organization. “Oh, and did someone ping the security and governance team too?”

Each group has slightly different definitions for customers, transactions, attribution, and engagement.

Before you can even run the analysis, you have to reconcile all of those perspectives.

At Foot Locker, this became so painful that the analytics organization eventually moved under the COO—simply because that’s where the most institutional knowledge lives.

The bottleneck wasn’t running the analysis. It was figuring out what the business actually meant by the data.

Trying to design a perfect ontology before those conversations happen is like mapping a mountain before anyone has walked the trails.

I assert the ontology era is over, courtesy of LLMs. Not because ontologies aren’t useful, but because designed ontologies don’t match how organizations actually learn.

Most companies don’t sit down and define their business perfectly on day one. Instead, institutional knowledge emerges gradually and informally in all sorts of places:

- in Slack conversations

- in analyst queries

- in meetings

- in ad-hoc explanations

But the systems we built for BI assume that knowledge should be designed upfront. That’s why ontology projects often take months and why they frequently become outdated the moment they are finished.

AI as the Scribe of the Business

AI introduces a completely different model. Instead of designing the ontology upfront, AI can observe how the organization actually operates. It can watch what questions people ask, what queries analysts write, how metrics get debated, which tables actually get used, and how analysts explain the numbers.

Then, an agent can connect all that together. From there, it can construct a living map of how the business understands itself.

Imagine a company trying to identify its most engaged customers.

One team believes engagement means opening marketing emails and clicking links. Another team believes engagement means using the product frequently.

Both perspectives make sense, but they produce different answers when you try to analyze the data.
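The split is easy to reproduce with a toy event log. In this minimal Python sketch, the customer names, event types, and counts are invented for illustration, and `most_engaged` is a hypothetical helper: "engagement" is scored either by marketing email clicks or by product sessions, and the two definitions crown different customers.

```python
from collections import Counter

# Hypothetical event log: (customer, event_type)
events = [
    ("acme", "email_click"), ("acme", "email_click"), ("acme", "email_click"),
    ("acme", "product_session"),
    ("globex", "product_session"), ("globex", "product_session"),
    ("globex", "product_session"), ("globex", "email_click"),
]


def most_engaged(events, counted_types):
    """Return the customer with the most events of the types that 'count' as engagement."""
    counts = Counter(customer for customer, kind in events if kind in counted_types)
    return counts.most_common(1)[0][0]


print(most_engaged(events, {"email_click"}))      # marketing definition: acme
print(most_engaged(events, {"product_session"}))  # product definition: globex
```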

Eventually the company has to decide how it defines engagement. Once that definition exists, the rest of the organization can rely on it.

That process—asking questions, debating definitions, and refining them over time—is how institutional knowledge actually forms.

AI doesn’t need to invent those definitions. It simply needs to capture them.
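In the simplest possible terms, "capturing" might mean a glossary that records each definition along with where it was observed and when it was adopted, instead of a schema designed upfront. This is a minimal Python sketch under that assumption; `Definition`, `LivingGlossary`, and the example sources are hypothetical, and a real system would also have to extract these definitions from Slack threads, queries, and meetings, which the sketch does not attempt.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List, Optional


@dataclass
class Definition:
    term: str
    meaning: str
    source: str       # where it was observed (hypothetical, e.g. "Slack #revops")
    adopted_on: date


@dataclass
class LivingGlossary:
    """Accumulates definitions over time instead of fixing them upfront."""
    history: Dict[str, List[Definition]] = field(default_factory=dict)

    def capture(self, d: Definition) -> None:
        entries = self.history.setdefault(d.term, [])
        entries.append(d)
        entries.sort(key=lambda e: e.adopted_on)  # keep each term's history in date order

    def current(self, term: str) -> Definition:
        return self.history[term][-1]

    def as_of(self, term: str, day: date) -> Optional[Definition]:
        """What the term meant on a given day, e.g. before a data migration."""
        past = [e for e in self.history.get(term, []) if e.adopted_on <= day]
        return past[-1] if past else None


glossary = LivingGlossary()
glossary.capture(Definition("engagement", "opens and clicks marketing emails",
                            "marketing team thread", date(2025, 1, 10)))
glossary.capture(Definition("engagement", "uses the product at least weekly",
                            "leadership decision", date(2025, 6, 1)))

print(glossary.current("engagement").meaning)                  # uses the product at least weekly
print(glossary.as_of("engagement", date(2025, 3, 1)).meaning)  # opens and clicks marketing emails
```

Keeping the history, not just the latest value, is what helps with cases like a metric that silently changed definition: `as_of` answers what a term meant when an older number was produced.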

The Future of BI

BI tools historically answered one question:

“What happened?”

But the next generation of systems will capture how the company defines success, how teams reason about metrics, how analysts interpret data, and how decisions get made.

The system of record won’t just be dashboards; it will be the shared understanding behind them. Perhaps we should call it a “system of understanding”?

The hard part of analytics will now be remembering what the data means and documenting how we use it in real time, as we finally attain that self-serve ideal.

Organizations constantly lose that context when analysts leave, teams reorganize, or systems change. Now, AI won’t replace analysts, but it might replace the institutional amnesia inside organizations.